
9/3/2023 Paraview linux

Major opcode of failed request: 150 (GLX) Errors like X Error of failed request: BadValue (integer parameter out of range for operation) Especially this condition is very unlikely to match, as we have no GPU cards on our frontends. Processes (Current value: paraview.5.3.renderingbackend.opengl2). ParaView handshake strings are different on the two connecting

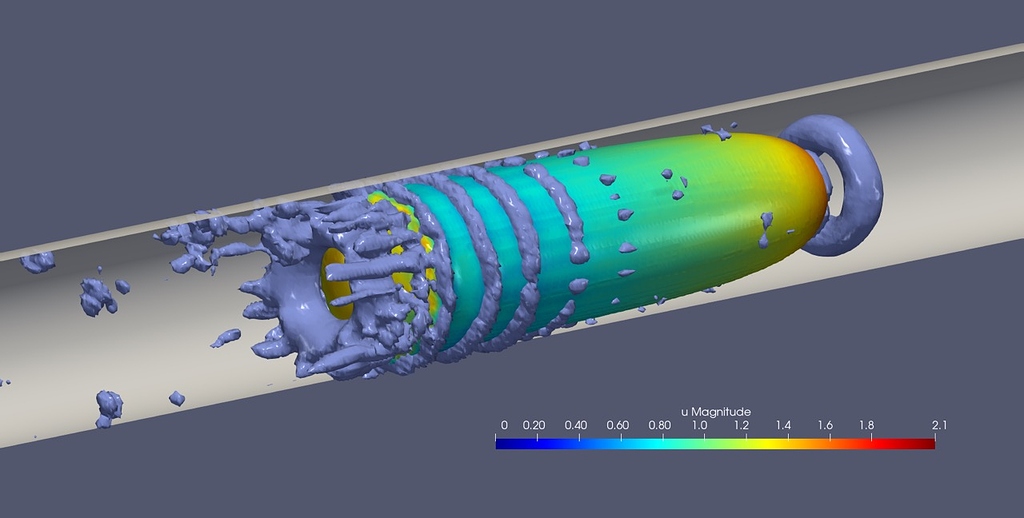

Two connecting processes (Current value: 100).ģ. vtkSocketCommunicator::GetVersion() returns different values on the Connection dropped during the handshake.Ģ. This can happen for the following reasons:ġ. The version of ParaView and pvserver must match. You are free to try to connect (or even reverse connect) your workstation/laptop ParaView to (a set of) pvserver processes running on our nodes.If you connect to multiple MPI processes but the data is still processed by one rank (see above), you would likely get faster user experience by omitting the whole remote connection stuff and opening the same data locally.However we have observed, that for small data sets (some 100s megabytes, you likely do not need parallelisation at all.) the data distribution works but looks more like data duplication, and for bigger data sets (gigabytes, you would like to have parallelisation.) D3 filter fails with allocation and/or communication issues. If your data is not distributed across processes you are free to try the D3 filter. even structured data sets could be misconfigured and became not processible in parallel.the data format reader must support parallel processing at all, see this overview.DO NOT LOAD ParaView in general as workarounds for above 100% CPU issue could affect general MPI performance.Įven if you started multiple MPI processes your data set could be processed by one single rank due to one (or many) following reasons:.Never load ParaView if you do not need it! Further information / Known issues We add some tuning variables to enable the above workaround this tuning will affect the performance of common production jobs. We found a workaround for Intel MPI for this issue thus we strongly recommend to use Intel MPI.

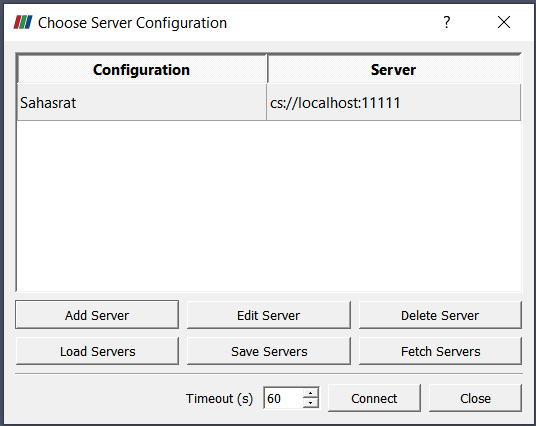

Please Note: As the MPI processes notoriously tend to consume 100% CPU, please start as low a number as possible and stop them as soon as possible. (As we use multiple MPI back end servers, the host name may vary from execution to execution.) Then in the ParaView GUI, go to 'File' -> 'Connect', and add a server according to the above settings.

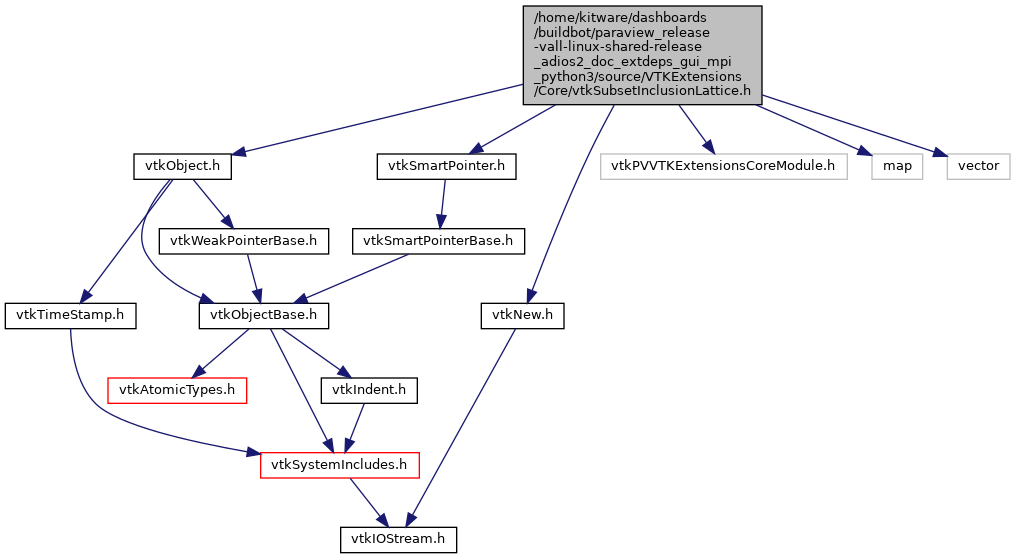

Will show something similar to: Waiting for client.Ĭonnection URL: cs://:11111Īccepting connection(s): :11111 After pvserver is being started, it will tell the connection data, e.g. In a separate terminal, load the same modules and start pvserver using $MPIEXEC. In this version, pvserver can utilise MPI to run in parallel. On some versions, a MPI-capable version of pvserver is available besides the serial version of ParaView. The included ParaView Server pvserver does not support MPI parallelisation (but often works with Intel MPI). Note: this will activate the 'binary' version of ParaView (installed from the official installer from ParaView web site). Specifying a version will list the needed modules: module spider ParaView/5.9.1-mpi ParaView is an open-source, multi-platform data analysis and visualization application.ĭepending on the version, you might have to load additional modules until you can load ParaView: module load GCC/11.2.0Īvailable ParaView versions can be listed with module spider ParaView.
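Since the ParaView client and pvserver versions must match, it can be worth comparing them before connecting. The following is only a minimal sketch: the helper names `extract_version` and `versions_match` are made up for illustration, and it assumes that both programs report a version string containing an `X.Y.Z` number (for example via a `--version` option, which you should verify on your installation).

```shell
#!/bin/sh
# Sketch: check that client and server report the same ParaView version.
# Helper names are hypothetical; they are not part of ParaView.

extract_version() {
    # Print the first "X.Y.Z" number found in the given string.
    printf '%s\n' "$1" | grep -oE '[0-9]+\.[0-9]+\.[0-9]+' | head -n 1
}

versions_match() {
    # Succeed only if both strings contain the same non-empty X.Y.Z version.
    client_version=$(extract_version "$1")
    server_version=$(extract_version "$2")
    [ -n "$client_version" ] && [ "$client_version" = "$server_version" ]
}

# Example with literal strings. In practice the two arguments would come from
# the client and the server, e.g. "$(paraview --version)" and
# "$(pvserver --version)" (assumed to print the version on your system).
if versions_match "paraview version 5.9.1" "paraview version 5.9.1"; then
    echo "versions match"
else
    echo "version mismatch"
fi
```

Comparing only the numeric part keeps the check independent of any surrounding text in the version output.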
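Because the host name may vary from execution to execution, it can help to read the connection data from the pvserver output instead of typing it by hand. This is a sketch under assumptions: it expects a line of the form `Connection URL: cs://HOST:PORT`, and both the function name `parse_pvserver_url` and the host name `node042` are invented for the example.

```shell
#!/bin/sh
# Sketch: pull host and port out of pvserver's "Connection URL" line.
# Assumes the line looks like "Connection URL: cs://HOST:PORT".

parse_pvserver_url() {
    # Reads pvserver output on stdin; prints "HOST PORT" for the first URL found.
    sed -n 's|^Connection URL: cs://\([^:]*\):\([0-9][0-9]*\).*$|\1 \2|p' | head -n 1
}

# Hypothetical captured output; "node042" is a made-up host name.
sample_output='Waiting for client.
Connection URL: cs://node042:11111
Accepting connection(s): node042:11111'

result=$(printf '%s\n' "$sample_output" | parse_pvserver_url)
pv_host=${result% *}   # text before the space
pv_port=${result#* }   # text after the space
echo "host=${pv_host} port=${pv_port}"
```

The extracted host and port are the values to enter under 'File' -> 'Connect' in the ParaView GUI.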
